At Screenly, we have a long track record of adopting new technologies (where it makes sense). For instance, we were early adopters of both Docker, back in 2014 (we even wrote a guest post later), and Kubernetes, back in 2016.

Behind the scenes, we keep improving our software stack. More recently, we’ve adopted Rust for certain workloads on our digital signage players and PostgREST to power parts of our API (more about this in a later blog post).

In today’s article, we’ll discuss another piece of technology that we recently adopted: Cloudflare Workers. For those not familiar with it, Cloudflare Workers is a serverless platform that lets you easily deploy simple services to Cloudflare’s edge network. This not only improves performance for the end user but, in our case, also radically reduces the cost of hosting the app.

Background

Some time ago, we created a set of sample apps for Screenly. These apps were intended to give our customers some nice-looking demo content for their screens when they first got started. We also open sourced these apps so that customers could modify them to suit their own needs. We have a collection of apps like this in our Playground on GitHub.

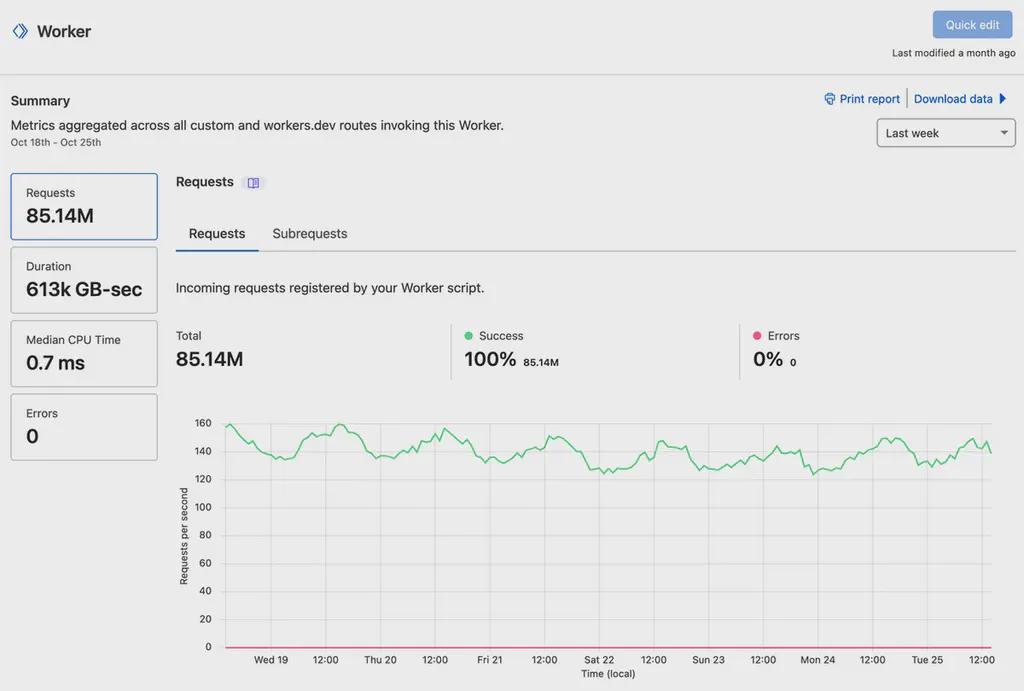

Two of the most popular apps were our clock and weather apps. To give you a sense of how popular these are, the weather app alone generates about 360M requests per month to the backend (which serves and caches weather data). Prior to moving to Cloudflare Workers, we had a dedicated Kubernetes cluster just to serve this backend.

The Move to Workers

During the summer, we decided that it was time to give both the clock app and weather app some overdue TLC. A new design was created, and we decided to take the opportunity to reassess the hosting of our apps. After exploring multiple options, we decided to move the backend to Cloudflare Workers.

Rewriting the previous Python/Flask app into JavaScript/Hono took a bit of effort, but the result was well worth it.

Not only did migrating to Workers save us money, it also significantly reduced the operational overhead. We were able to sunset the old Kubernetes cluster, while still serving content to our customers at lower latency.

One tricky part of running a workload like this on edge servers is caching. While Workers do support centralized caching through Workers KV (Cloudflare’s key-value store), we realized that this wasn’t necessary in our case. Instead, we decided to cache only on the edge node that serves the request. The reasoning is that if a screen requests the weather for, say, LA, the screen is most likely in LA too, so its requests will be served by the datacenter closest to LA. Other screens asking for LA weather will mostly hit that same datacenter, so a local cache gets nearly the same hit rate as a global one. This meant that we could simplify our app a bit further.
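
To make the idea concrete, here is a minimal TypeScript sketch of a cache that lives only on the node handling the request. It is an illustration under stated assumptions, not the code running in production: the `Weather` shape, the `LocalTtlCache` class, the `getWeather` helper, and the `fetchUpstream` callback are all hypothetical names. In a real Worker, the runtime's Cache API (for example `caches.default`) would typically play this role; the in-memory class is a stand-in so the sketch runs anywhere.

```typescript
// Hypothetical shape of a weather payload; the real backend's schema may differ.
interface Weather {
  city: string;
  tempC: number;
}

interface Entry<T> {
  value: T;
  expiresAt: number; // epoch milliseconds
}

// A tiny TTL cache local to one node. Nothing is shared between datacenters,
// which is acceptable when requests for a city mostly arrive near that city.
class LocalTtlCache<T> {
  private entries = new Map<string, Entry<T>>();
  private ttlMs: number;

  constructor(ttlMs: number) {
    this.ttlMs = ttlMs;
  }

  // Returns the cached value, or undefined on a miss or after expiry.
  get(key: string, now: number = Date.now()): T | undefined {
    const entry = this.entries.get(key);
    if (entry === undefined) return undefined;
    if (now >= entry.expiresAt) {
      this.entries.delete(key); // expired: drop it so the caller refetches
      return undefined;
    }
    return entry.value;
  }

  set(key: string, value: T, now: number = Date.now()): void {
    this.entries.set(key, { value, expiresAt: now + this.ttlMs });
  }
}

// Serve from the local cache when possible; otherwise call the upstream
// weather source (passed in as an assumption) and remember the result.
async function getWeather(
  city: string,
  cache: LocalTtlCache<Weather>,
  fetchUpstream: (city: string) => Promise<Weather>,
): Promise<Weather> {
  const key = city.trim().toLowerCase(); // normalize so "LA" and "la " share an entry
  const hit = cache.get(key);
  if (hit !== undefined) return hit;
  const fresh = await fetchUpstream(city);
  cache.set(key, fresh);
  return fresh;
}
```

The trade-off is that each datacenter warms its own cache independently, so a city requested from many regions is fetched upstream once per region per TTL window. For weather data that is requested mostly from where it applies, that cost is small.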

If you’re curious about how this all worked out, or want to modify our weather or clock apps to your own needs, the full source code is available on GitHub under the Screenly organization.

As we keep building out more examples in our Playground, Workers will likely be our new go-to when we write anything that requires a simple server-side component.